Andrej Karpathy, Tesla’s former AI director, brought vibe coding into the spotlight: a practice in which programmers state their requirements in natural language and let AI write the code, an approach widely expected to level the playing field for coding. However, a paper titled “How AI Impacts Skill Formation,” released by Anthropic, the company behind Claude, uses a controlled experiment to document the drawbacks of over-reliance on AI for programming: in the study, AI assistance did not deliver a significant speedup, and it undermined the core competencies programmers took away from the task, pouring cold water on the frenzy around this coding method.

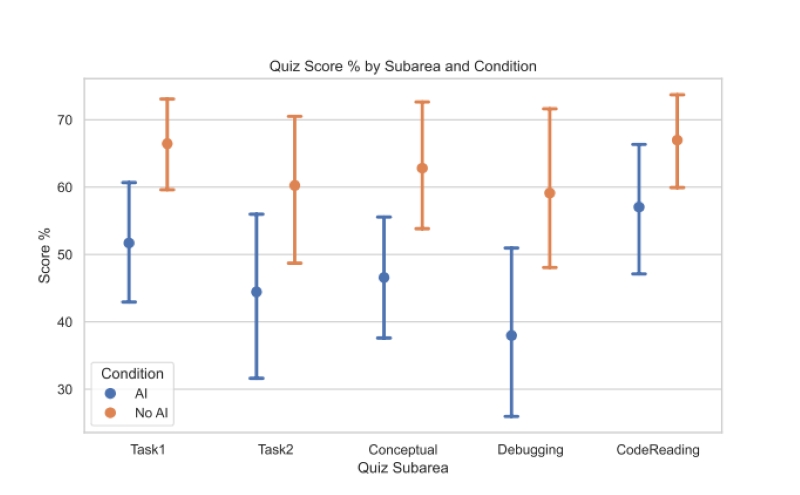

Anthropic conducted an experiment with over 50 Python programmers, tasking them with learning Trio, an obscure Python library, to complete asynchronous programming assignments. The participants were split into two groups: a manual group that only had access to official documentation and search engines, and an AI group equipped with a GPT-4o-level AI assistant. The results showed that the AI group scored an average of 17% lower on post-task assessments, with the most significant gap in debugging ability. Notably, there was no statistically significant difference in the total time taken to complete the tasks—23 minutes for the AI group versus 24.7 minutes for the manual group.

The failure of the AI group to improve efficiency stems from two key issues: the “interaction tax” and poor debugging skills. Some programmers spent as much as 30% of their time on a single task crafting prompts in an attempt to get perfect code from the AI. Additionally, the AI group fell into a vicious cycle of “generate code → encounter errors → ask AI to fix them,” ultimately turning projects into unmaintainable black boxes of spaghetti code. Meanwhile, the programmers themselves stayed in a passive “waiting for results” state, so they neither saved time nor acquired knowledge.

By analyzing screen recordings, the study sorted the AI-using programmers into six types, which fell into two groups with very different learning outcomes. The “hands-off,” “give-up easily,” and “blind trial-and-error” types made up the low-scoring group, with average scores below 40%. These programmers either mindlessly copied and pasted AI-generated code or abandoned independent thinking when they hit problems, reducing themselves to little more than “human testers” for the AI. In contrast, the “inquisitive,” “pre-emptive,” and “hybrid collaboration” types achieved human-AI symbiosis, matching the performance of the manual group. They either consulted AI on concepts before coding independently, asked for underlying principles after receiving AI-generated code, or requested explanations of the logic alongside the code, and in every case they stayed actively engaged with the problem.

Behind this phenomenon lies the psychological principle of “cognitive offloading.” AI is like an exoskeleton: it grants immense “strength,” but long-term reliance causes the “technical muscles” to atrophy from disuse. In the experiment, participants in the manual group encountered an average of three errors each, and working through those errors independently built a deeper understanding of the library. Participants in the AI group, however, faced only one error on average; the seemingly smooth experience deprived them of the “friction” that skill development depends on. More importantly, regardless of whether programmers had 1–3 years, 4–6 years, or over 7 years of experience, those who over-relied on AI performed worse than the manual group. AI dulls the thinking of anyone who uses it to cut corners, whatever their seniority, and it creates an illusion of “happy ignorance”: programmers feel confident while coding, yet are left helpless when facing real-world problems. The distinction that matters is between leveraging AI as a tool and leaning on it as a crutch.

Anthropic’s research does not negate the value of AI in programming; instead, it offers a survival guide for the AI era. Programmers should treat AI as a mentor, not an intern: prioritize asking about principles over delegating tasks, review AI-generated code line by line, and analyze bugs independently before seeking AI assistance. AI can enhance programming efficiency, but only for programmers who have mastered the core competencies first. Only by holding on to independent thinking can they avoid being left behind in the age of human-AI symbiosis.